Developmental and diverse feedback: Helping first-year learners to transition into higher education

Educator Erin Smith provides an overview of the feedback in this subject.

Summary

In this first-year medieval history subject, educators provide learners with a range of diverse assessment tasks and feedback. The feedback design is structured to help first-year learners to transition into higher education, and incrementally build up their content knowledge and skills around seeking and using feedback. Specific aspects of this case study include:

- Assessment tasks and related feedback moments which are spread across the semester, rather than being presented only at the middle and end of semester;

- A diverse range of assessment tasks and feedback types, including self-feedback, automated feedback, peer feedback, and face-to-face feedback;

- Personalised feedback, made possible by educators who worked hard to build relationships with learners; and

- Educators who help learners to build their confidence and knowledge around how to seek and use feedback.

Keywords

iterative feedback; personalised feedback; first-year transition; learner engagement; diversity of feedback

The case

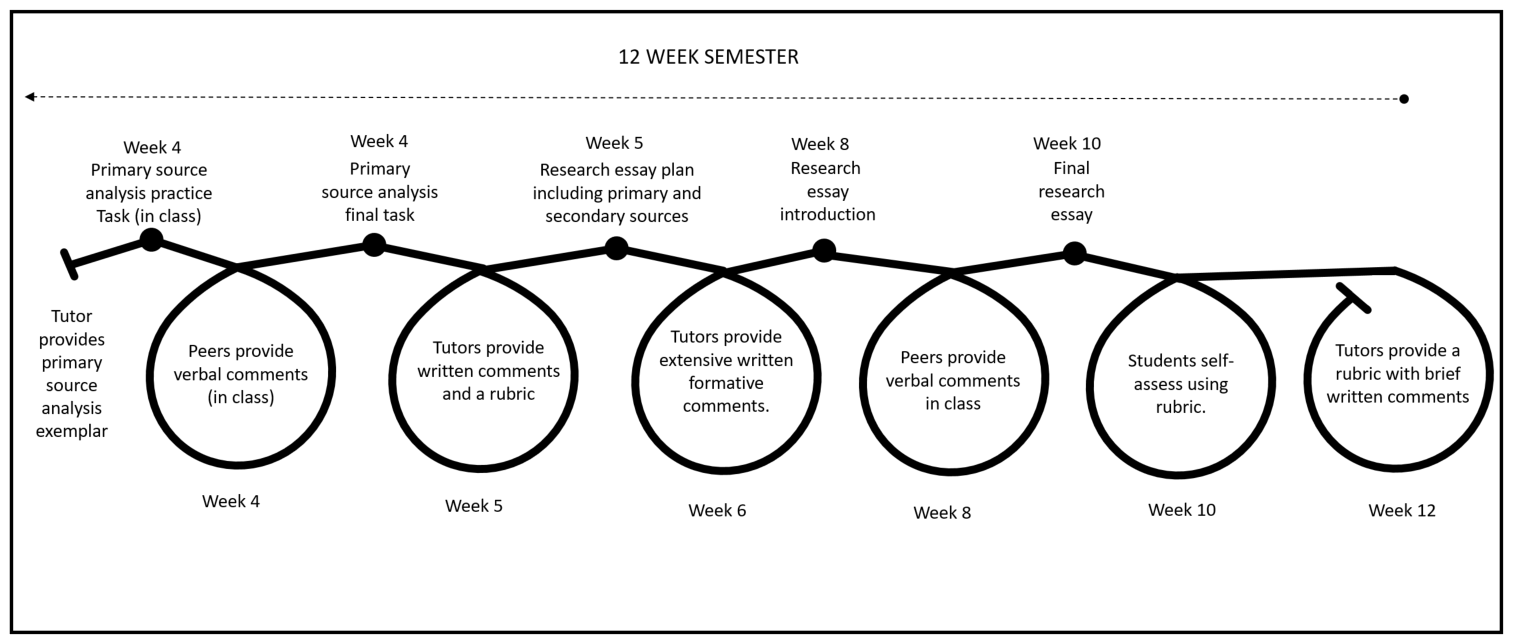

This first-year subject is delivered in Semester 1, and is often one of the first subjects that History students undertake at university. With this in mind, the educator-in-charge purposefully designed the assessment and feedback structure to help learners develop the skills necessary for first year (and beyond) in an iterative manner. Learners we spoke with appreciated this approach, stating “…it’s a change from high school, you know? And you’re just starting out. So that was really helpful for me…because the teacher provided a lot of help…it just taught me how to write, which you don’t really get in high school.”

The incrementally developmental nature of the feedback design becomes evident when looking at the range of assessment types offered in this subject (see inset). For example, the first formal assessment task (analysis of a primary source) is designed to gently extend learners’ skills beyond those they have acquired in secondary school. The educator-in-charge explains that, before completing the formal task, learners attempt a practice analysis in their tutorial and then swap work with their peers to receive and provide performance-related comments. Following this, learners complete and submit their formal analysis of a primary source and receive their educator’s feedback comments. For their next formal piece of assessment, learners complete an essay plan, which builds upon their work in the primary source analysis. Educators then provide extensive formative comments on the essay plan, which learners can use to complete the next formal assessment piece – the research essay.

The diagram below shows the linked nature of a selection of written assessment tasks in this subject, including the first three formal tasks (i.e., the final primary source analysis task, research essay plan, and the final research essay) and the optional minor tasks (i.e., the primary source practice task and the research essay introduction). At each stage, learners receive performance information that they can act upon in a subsequent task. Learners who complete all five of these tasks receive a significant amount of useful feedback by the completion of the final research essay.

The educator-in-charge for this subject believes that the comments provided on the essay plan are particularly effective

because of the depth of feedback that [learners] get. They don’t get a rubric…they get actual written, thorough, full comments on every aspect of the plan that are targeted to the rubric for the essay overall. And [learners] love it…almost without exception [they do] five or plus points better in the final essay than in the plan, which is really heartening.

After submitting the final essay, learners receive summative comments from their educators, thus closing the feedback loop for the series of analytical tasks.

Figure: Series of feedback loops for tasks relating to analysis of primary and secondary sources.

We spoke with three learners from this subject, and each acknowledged the positive impact of the feedback design. One stated that for the primary source analysis the cohort “…were given really clear instructions, so we knew exactly what we were doing…I think that was the first assessment I had [at university] and that was just really good, because that helped me out with all the [other] subjects I was doing.” Another learner revealed that their educators’ comments had helped them in a subsequent subject in the next semester, “I did [another History subject] the second semester [and I] already had an idea of how to write my essay from [the Medieval Europe subject]. I didn’t struggle with it as much, because I’d already, sort of, learnt a bit more of the skills that I needed, especially when it comes to History, because every essay’s so different.”

It should be noted that the developmental assessment and feedback design used in this subject involves a high number of assessment tasks. The educator-in-charge acknowledges that this approach could be risky, as learners may feel overwhelmed. To avoid this reaction, she suggests introducing each assessment task individually, explaining to learners how it extends their skills from the previous task and prepares them for a subsequent task. For example, when learners receive comments from their educators on the primary source analysis, it is made explicit that they should try to use these comments to improve their research essay later in the semester. Learners are also advised that, while many of the assessment tasks are optional and they will not be penalised for failing to complete these tasks, completion could give them an advantage in the formal tasks. An educator remarked that learners who did engage in these optional tasks tended to perform better in the formal assessment tasks, “perhaps because they’ve taken that little step further [and] become a little bit more independent”.

The inclusion of so many assessment tasks means that learners need to receive comments on their performance in time to improve their next piece of work. One way the educator-in-charge manages this without overloading the teaching team is by designing tasks that allow learners to obtain feedback from a variety of sources, such as peers, themselves (through a self-assessment rubric and reflective writing tasks), automated online sources, and librarians. This approach also helps learners develop strategies for seeking feedback themselves, as noted by the educator-in-charge:

if I can give a handful of [learners] that knowledge, then they’re on the first rung of the ladder. So the whole design of the [subject] came from that aim, to give [learners] actually what they need to succeed rather than just expecting them to divine it by osmosis…

Educators in this subject also make an effort to get to know their learners on a personal level. This approach to creating feedback means that they need to pay attention to each learner’s individual strengths and weaknesses during class time, and also when marking assessment tasks. Although this approach involves investment from educators, it helps learners feel more engaged and motivated to achieve in the subject. For example, one learner we spoke with told us:

I just felt really comfortable with my [educator] and I really believed in her ability to show me what I really needed to do. I think it does help when you have a good relationship with your [educators] to keep up, like, a higher standard of your work…it made you want to work harder for that main purpose, because you were enjoying your [educator/learner] relationship a lot more, and because they were passionate, you were getting passionate.

Why it worked

The design

In this case, feedback was considered to be successful particularly because of the following key elements:

- Timing and frequency of assessment and feedback opportunities: assessment tasks and feedback moments are frequent, but they are also well-spaced and clearly signposted, to avoid learners becoming overwhelmed. Feedback moments that are designed to assist learners with their major assessment tasks are also structured to occur within almost every tutorial.

- Explicit connections between feedback and assessment tasks: the subject’s assessment and feedback design provides learners with many opportunities to show how they could improve their performance. One learner remarked, “[completing] the essay plan before the essay was really, really good and effective. I still write [essay plans] even if I don’t have them as assessments now. I’ve just come to do it and go to my [educators] anyway.”

- Diversity of assessment and feedback types: the diversity and disciplinary relevance of the assessment and feedback types foster learner engagement in the learning activities. This approach also enhances learners’ in-class performance.

- Personalised comments from educators: educators pay attention to each learner’s individual strengths and weaknesses by attentively reading their written work and taking note of their participation in tutorials. This helps the educators to provide comments that are tailored to the individual learners.

Educator Erin Smith explains what works in this subject and why.

Enablers

Some of the enabling factors for this feedback design included:

- University policy that allowed for diversity of assessment and feedback types: while the University previously required that first-year learners complete an exam at the conclusion of every subject, a policy change allowed the educator-in-charge to redesign this subject to focus on specific disciplinary skills relating to research and analysis, rather than simply remembering facts.

- Consistency among educators: the educator-in-charge created a detailed 115-page handbook for educators to support consistency in teaching, assessment and feedback practices. This handbook details the purpose of feedback and provides advice regarding expectations for providing comments to learners. This is to ensure that all learners in the subject receive feedback of a similar high quality. Staff teaching into this subject also have a weekly meeting where they discuss potential problems with marking, which further facilitates a consistent feedback approach. This time is counted as teaching preparation.

- Teaching and Learning showcase events: the University demonstrates that it values feedback by hosting learning and teaching conference days, where educators can share their experiences and advice around feedback practices. The educator-in-charge felt inspired to make changes to the feedback design as a result of attending these events.

Challenges

Some of the challenges for this feedback design included:

- Traditional semester structure: the design seen in this case study can be restricted by a traditional twelve-week semester, as it provides limited time for learners to complete the various activities and tasks required to build their skills in an incremental manner. In addition, a twelve-week timeframe makes it challenging for educators to provide useful performance information in time for learners to demonstrate improvement on a subsequent task.

- Getting to know learners: developing relationships to the extent that personalised feedback can be provided may take a lot of time and effort from staff. A member of the teaching team we spoke with noted this, stating “I probably put way too much time and effort in”; however, she felt that the positive impact on learners made the time and effort worthwhile.

- Learners handing in work late: in order for this feedback design to work, educators need to return comments to learners in time for them to use on their next assessment task. This process can be made more difficult if learners don’t hand in their assessment tasks on time. While it is difficult to enforce learners handing in work by the stipulated due dates, they may be more motivated to do so if they are aware of how timely submission will assist them in their next piece of work.

What the literature says

Feedback is a cyclical process through which learners obtain information related to their performance and use it to improve their future work. Based on this conceptualisation, the feedback process is only completed when learners are able to demonstrate the effects of the performance information they have received. When designing feedback, it is critical that educators provide learners with opportunities to demonstrate that an effect has occurred. As shown in this case study, educators can help learners demonstrate the effects of feedback by providing performance information on an initial task, then allowing learners to enact that information by completing a follow-up task with similar learning outcomes (see Boud & Molloy, 2013 for further reading).

However, while educators can do their best to design assessment tasks with linked competencies, it is also necessary that learners are sufficiently engaged and motivated to use feedback information to improve their future work. Feedback design should therefore be sensitive to likely learner characteristics for each subject. As Beaumont, O’Doherty, and Shannon (2011) point out, there can be dramatic differences between learners’ feedback experiences in secondary school and university. Learners in a first-year subject may therefore lack knowledge of how best to use performance information to enhance their future work. Poulos and Mahony (2008) suggest that teachers designing feedback for first-year learners might consider including information that assists learners in their transition to higher education.

Feedback may also be more effective when educators try to engage learners through diverse and personalised feedback. Hattie and Timperley (2007) assert that learners are better able to engage with feedback when educators clarify the purpose of performance information and offer learners a diverse range of tasks with low levels of complexity. Carless (2013) also argues that the development of respect and trust between learners and educators can increase learners’ levels of receptiveness to the messages provided within performance information, thus improving the chances that this information will be enacted.

Moving forwards

Advice for educators

The participants in this case offered several suggestions for educators wishing to trial the feedback design:

- Space feedback and assessment moments: learners need multiple opportunities to demonstrate improvement, but it is useful to space assessment tasks out as much as possible so that learners are not overwhelmed by the volume of assessments.

- Tell learners why: let learners know that the assessment and feedback process is explicitly designed to help them. Learners will be more likely to engage in activities if they understand why the design is being used. Explain to learners the purpose of each task, what the educators’ response will be, and how this will help their performance on major assessment tasks.

- Take the time to develop a relationship with learners: providing personalised feedback comments is easier when educators take the time to develop a relationship with learners. To this end, it can be useful to understand learners’ motivations and discover their capabilities and personal barriers. Learners also tend to be more motivated to engage in and improve their performance when a personal relationship is established. Admittedly, this can take time; but educators may be more likely to invest this time if they recognise that doing so will improve both the quality of the assessment tasks that learners submit and learner outcomes.

Advice for institutions, schools, and faculties

This case offers several useful insights for leaders within institutions wishing to support similar feedback designs:

- Don’t assume that learners know how to seek and use feedback: one of the learners we spoke with noted, “I don’t know who to go to [for feedback]…because at university, I don’t feel like it’s offered to you.” This comment highlights that educators may need to help learners recognise the varied resources that are available to assist them with improving their work, and how best to act upon performance information.

- Encourage feedback prior to task submission, when it is most important: one learner pointed out that his educator “wasn’t allowed to read a draft for the essay, because he has to mark it”. This runs counter to effective feedback practices, as learners need to obtain comments related to their performance prior to completing tasks. If policy dictates that educators are not able to engage in feedback dialogues with learners before submission, learners need to be made aware of other useful sources.

- Inspire educators through teaching and learning showcases: demonstrate to educators that feedback is valued by offering opportunities where they can learn about effective and innovative practices in feedback design.

- Rethink the traditional semester and assessment structure: consider ways to reorganise semester models, so that educators have more time to structure developmental and incremental assessment and feedback moments across the teaching period.

- Consider whether existing policy may restrict developmental approaches to feedback: the assessment and feedback design used in this subject was only able to be implemented due to a change in policy which meant that first-year learners were no longer required to complete exams at the conclusion of every subject. Consider how simple changes to policy may support developmental and disciplinarily suitable approaches to feedback.

- Provide educators with feedback time: providing learners with useful and personalised feedback takes time. It is therefore essential that both marking and feedback time are included in workload calculations for educators.

References

- Beaumont, C., O’Doherty, M., and Shannon, L. (2011). Reconceptualising assessment feedback: a key to improving student learning? Studies in Higher Education, 36, 671-687. doi:10.1080/03075071003731135

- Boud, D., and Molloy, E. (2013). Rethinking models of feedback for learning: the challenge of design. Assessment & Evaluation in Higher Education, 38, 698-712. doi:10.1080/02602938.2012.691462

- Carless, D. (2013). Trust and its role in facilitating dialogic feedback. In D. Boud and E. Molloy (Eds.), Feedback in higher and professional education: Understanding it and doing it well (pp. 91-103). Abingdon, Oxon: Routledge.

- Hattie, J., and Timperley, H. (2007). The power of feedback. Review of Educational Research, 77, 81-112. doi:10.3102/003465430298487

- Poulos, A., and Mahony, M. (2008). Effectiveness of feedback: the students’ perspective. Assessment & Evaluation in Higher Education, 33, 143-154. doi:10.1080/02602930601127869