Investing in educators: Enhancing feedback practices through the development of strong tutoring teams

Educator-in-charge Ros Gleadow provides an overview of the feedback in this subject.

Summary

This case illustrates the importance of investing in tutoring staff if we are serious about enacting good feedback. Investing time in orientating learners to the purpose and process of feedback at the start of a subject of study is also important, and frequently overlooked. This case involved a writing-focused Science subject with over 600 learners, a team of twenty-five educators and a passionate educator-in-charge. The subject and its feedback processes were highly praised by learners despite perceptions of challenging learning outcomes (this subject is the only subject exclusively focusing on writing in the sciences) and a large learner cohort. Key features of this case study include:

- Development of strong teaching teams;

- Making explicit that feedback is a ‘helping’ mechanism at the start of the subject;

- Iterative and nested tasks so that feedback has an effect;

- Multiple forms and sources of feedback; and

- Group-based feedback where learners learn vicariously.

Keywords

learner engagement; multi-source feedback; nested tasks; educator development; vicarious learning

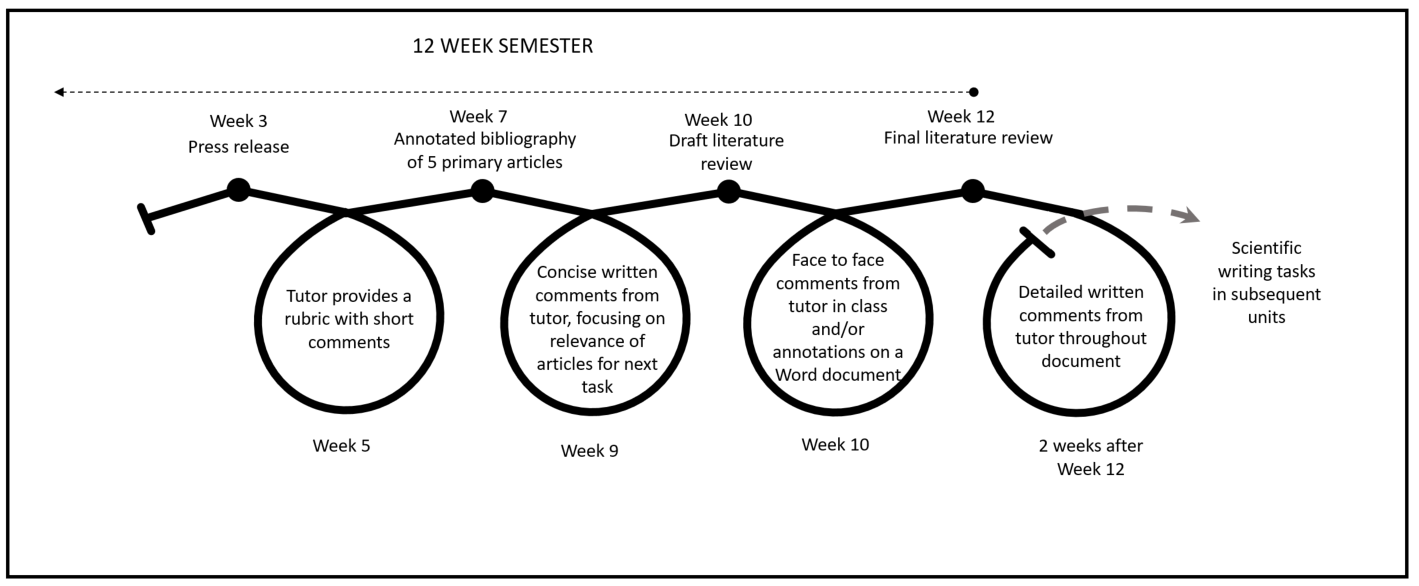

The case

This subject is a core second-year Science subject. It is the only subject in the Science faculty which focuses exclusively on writing and communication, and it can therefore be a challenging learning experience for learners. The assessment in this subject is characterised by multiple tasks using multiple mediums – written essay, verbal presentation and written examination.

Educators provide most of the feedback comments, and one assignment also uses peer feedback (in pairs). Feedback information on performance is offered to learners via rubrics, comments, and also informally through learner-educator email exchanges. These informal exchanges were praised highly by learners preparing for written tasks. Feedback comments on written work are returned electronically to learners, and educators are instructed to use tracked changes for the first two pages to model expected expression, grammar and argument. The same level of detail is not expected for the remainder of the assignment, and learners are cued into this expectation of early detail in feedback comments.

Learners not only receive individual feedback on their own work, but are given opportunities to hear about common strengths and deficits in the cohort’s work. As an educator explained, “usually after an assessment I put up on my slides common mistakes. I think that’s effective feedback for the entire class, common mistakes and the things that they did well”. Communication of these synthesised feedback comments provides another opportunity for learners to engage with the standards of the assessment, and to perhaps gain comfort from finding that other members of the cohort also have room for improvement in their performance.

Educators are not the sole providers of comments on work. Learners are paired in the subject, and are asked to evaluate the work of their partner. Not only does this peer feedback act as an extra loop of performance information (to enable work refinement), it also encourages the peer assessor to engage with the standards of work (criteria). In this way, peer feedback can help to develop learner skills in evaluative judgement, which is seen as an important skill set for work beyond this subject.

The largest assessment task is a literature review, and this is broken into four smaller iterative tasks in order to foreground the role of feedback information in improving work. The educator-in-charge purposefully named these smaller tasks 1A, 1B, 1C and 1D to reinforce the importance of feedback comments in building quality over a series of iterations. This iterative design aims to demonstrate to learners the value of feedback in producing better work, by making the implementation of feedback in subsequent tasks visible to the learner. Learners are also given freedom to choose their own topic for the literature review assignment, and this is seen to encourage learner engagement with the work across the assignment’s four stages.

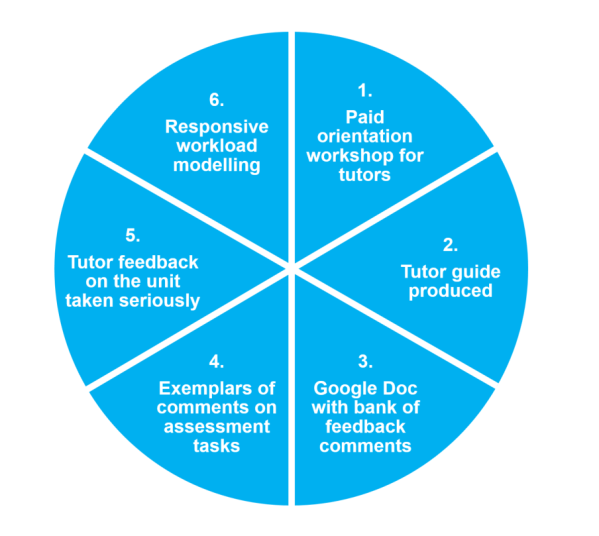

When interviewed, the educator-in-charge and educators for this subject discussed the importance of making the value of feedback explicit to learners – not only to assist their understandings of feedback, but to assist their development as writers. As the educator-in-charge explains, “from the beginning we saw it as very important that [learners] actually had the opportunity to develop their writing; [not just to] assess whether they could write or not, but … to actually teach them to write”. Educators for the subject described using feedback to show that they are invested in the learners. Educators make explicit their intention to help learners through feedback at the beginning of the subject, and ask learners for a return in effort to complete the loop. One educator we spoke with indicated that they articulate their own feedback philosophy to learners: that feedback is not ‘done to learners’ but is rather a process offered to learners, for learners, so that they themselves can migrate towards mastery. As the educator explains, “Feedback is about how [learners] can make themselves better”.

The learners we interviewed also indicated that this concept of feedback as a helping mechanism is made explicit to learners – both in the introduction to the subject given by educators, which outlines their plan for the subject, and also through the very construction of feedback comments. As a learner explains, “the way they give feedback … I don’t know how it could be seen as discouraging to anyone … for me it was very encouraging.” The same learner also reflected the tutors’ feedback intentions in their own description of feedback: “feedback is like knowing, ok, I did this wrong, that wrong, I didn’t understand this question the way they wanted me to so I would change it the next time around”.

This subject has a teaching team of twenty-five educators, overseen by a highly invested educator-in-charge. The educator-in-charge has been in the role for seven years and feels that, along with designing, teaching and refining the subject, they have a key role in coaching educators to engage in consistent and high-quality feedback practices. This coaching takes the form of an intensive training workshop for educators at the beginning of the year, and weekly teaching team meetings. As the educator-in-charge explains, “in that weekly meeting, when assignments are due, we say, ‘these are the types of things you’ll see, this is how [educators] have done it from previous years, so I find that you need to do this’”. Educators are also provided with an educator guide and a comment bank, which offers example feedback comments to clearly communicate expectations for level of detail and tone. Additionally, educators participate in a cross-marking exercise that offers a chance to compare and discuss feedback approaches. All training activities and meetings are paid. The diagram below illustrates the processes used to engage educators in provision of feedback comments within the subject.

Why it worked

The design

In this case, feedback was considered to be successful because of the following key elements:

- Learners are orientated to the purpose of feedback at the start of the subject: educators in this subject acknowledge that effective feedback design involves orientation of learners to what feedback is, and why it is important for learners. Educators do not take for granted that learners share educators’ understandings of feedback (that is, that feedback is more than comments on work). This sort of verbal orientation should not be reserved for day one, year one of courses; rather, learners are likely to benefit from engaging in ongoing discussions throughout their course about feedback mechanisms that are designed to improve their performance both on and beyond tasks.

- Tasks are nested and iterative: assessment tasks are designed in such a way that learners are challenged to meet more difficult learning outcomes over time. The tasks also contain overlapping competencies, so that learners have an opportunity to put into practice new strategies they have gained through engaging with peer and educator evaluative processes. Educators explicitly cue learners into nested tasks by naming assignments as a series (1A, 1B, 1C, 1D).

- Multi-source feedback: in addition to educators providing evaluations on the quality of learners’ work, learners are asked to evaluate the work of their peers. The peer evaluations are supported by guidelines for peer review. Learners act as both the provider and the receiver of performance information, and peer feedback thus generates learners’ engagement with standards of work and develops capabilities of evaluative judgement – both of which are important for future work.

Educators Ros Gleadow and Lisa Kass explain what works in this subject and why.

Enablers

Some of the enabling factors for this feedback design include:

- Educators are valued and developed: this subject invests in its teaching team. Educators are provided with annual training, and are paid for preparatory meetings and marking calibration exercises. Educators are also provided with resources to support their teaching and feedback practices. These measures help educators to feel valued as important members of the teaching team.

- Educator and learner feedback about the subject is acted upon: the educator-in-charge welcomes suggestions from educators on how the subject may be improved. Together with learner evaluations, this feedback has been used to refine the subject, demonstrating firsthand to educators and learners that their feedback has an effect, and is not merely a mandated process to satisfy policymakers.

- Learners have autonomy to select assignment topics: allowing learners to choose a topic of interest for their major assignment (the literature review) was seen to enhance their continued engagement in the staged tasks. Genuine interest in the work may help to foster learners’ genuine interest in the feedback processes designed to help improve those pieces of work.

Challenges

Some of the challenges for this feedback design include:

- Institutional conceptions about the role of ‘markers’: the educator-in-charge has worked hard to generate workload models which support educators’ work beyond direct marking tasks. For instance, the educator-in-charge has shown initiative in cancelling a mid-week tutorial within the semester, to allow for educators to spend more time on important feedback processes. However, the educator-in-charge had been in the role for seven years, and had already received excellent subject evaluation scores, before making this bold change. A less-experienced educator-in-charge may not have the confidence to undertake this nimble reprioritisation.

- Expectations of turnaround time of ‘informal feedback’: one of the features of this subject is the invitation to learners to directly email their educator with any concerns regarding either content or their own work in building towards assignment submission. Learners we interviewed praised this email exchange as a feedback mechanism, but also reported that, at times, they were frustrated by delays from educators in responding. It may be important to set realistic expectations for learners around turnaround time for informal exchanges.

- Peer feedback can be seen as the poor cousin to educator feedback: learners we spoke with reported that they privilege educator-generated feedback comments over peer-generated comments. Of concern to some learners was that their peers possess the same level of knowledge (and limitations) as themselves, and therefore may be less likely to identify areas for improvement.

What the literature says

Explicit conversations with learners about the process and value of feedback are advocated in Feedback Mark 2 (Boud & Molloy, 2012), and explicit orientation to the purpose of feedback in fact constitutes the first items on the ‘feedback quality instrument’ developed by Johnson and colleagues (2016). Carless (2006) also highlights that learners and teachers often have different views on what feedback is, and argues that there is merit in engaging in conversations to broaden conceptions of feedback early in programmes.

The assessment tasks in this case study were designed to have overlapping qualities throughout the semester, and this design is recommended by Boud and Molloy (2012) in the model ‘Feedback Mark 2’. This means that, although the assessment tasks may take the form of different mediums (for example, a written assignment followed by an oral presentation), there are overlapping competencies between tasks. This ‘nesting of tasks’ enables the learner to translate new learning strategies, gleaned through engagement with performance comments, into a subsequent task – thus closing the loop.

Learners have reported reservations about the value of peer feedback for their learning. Learner-based concern about the ‘blind leading the blind’ was reported in Tai, Canny, Haines, and Molloy’s (2016) study on peer feedback in medical education. The accuracy of peer assessment may be improved by the provision of frameworks or guidelines that orientate the learners to the standards of work (Stegmann, Pilz, Siebeck, & Fischer, 2012). Nicol (2014) provides principles for maximising the value of peer-generated evaluations of learner work. Other factors to consider when designing peer feedback include the tendency for learners to make credibility judgements concerning the source of the feedback (Watling, Driessen, van der Vleuten, & Lingard, 2012), and also the extent to which trust is established between the two parties involved in the feedback exchange (Carless, 2013).

Moving forwards

Advice for educators

The participants in this case offered several suggestions for educators wishing to trial the feedback design:

- Orientate learners to the purpose of feedback: it is best to commence a subject with the assumption that not all learners have an understanding of the purpose and process of feedback which is shared by educators and feedback designers. Explicitly orientating learners to the purpose of feedback – and to expectations about learners’ engagement with such processes – is important. Discussions with learners could run along the lines of the following example: “comments about work are not designed to deflate you, but rather to help you gain clarity about your own work and how it relates to the goal of the work. As much as possible, we have built in opportunities for you to attempt a related skill or piece of work that enables you to try on the new strategies for size, and hopefully you’ll see the difference in the quality of your work in the next attempt”.

- Provide educators with examples of feedback comments: educators value a ‘bank’ of phrases for making feedback comments, in order to gain a sense of the expected tone and level of detail.

- Create clear links for learners between nested tasks: explicitly demonstrating that pieces of work are linked, and that feedback comments on work are designed to build up work progressively, is important. This can be achieved through verbal orientation to tasks, such as explaining the purpose of an assessment task to learners, and even by simply signalling that smaller pieces of work form a whole assessment task through a numbering system (e.g., 1A, 1B, 1C).

- Promote learner understanding of the value of peer feedback: learners may be suspicious of peer feedback processes if they privilege the expertise of the educator over that of their peers. It may be helpful to orientate learners to the value of peer learning and feedback (e.g., lifelong competency in the workplace), and to highlight areas that peers may have the capacity to judge at this point in their education.

Advice for institutions

This case offers several useful insights for leaders within institutions wishing to support similar feedback designs:

- Recognise diverse workload models: workload modelling should be flexible and should account for the fact that the feedback process is more than simply providing comments on work. Workloads should allow for feedback practices beyond marking of submitted assessment, such as calibration and training exercises.

- Invest in, support and value educators: time spent is time saved. Invest in educator training around subject learning outcomes, assignment purposes, and the quality of feedback comments. Educators also need support in the form of educator guides, comment banks and regular meetings. It is important that educators are paid to attend all meetings and training. It is also important to take heed of educator suggestions and show how this feedback has been incorporated into subsequent subject design.

- Support peer feedback across the institution: peer feedback is an important mechanism that helps to alert learners to the value of building a picture of performance through multiple sources. Peers making judgements on a fellow learner’s work need to engage with the standards for the work in order to make an evaluative judgement. Guidelines to support these peer judgements, in the form of criteria or basic headings, may help orientate learners to these opportunities to make judgements of others’ work.

Resources

For a range of resources to support university educators in designing good assessment, visit the Assessment Design Decisions Framework website: http://www.assessmentdecisions.org

For a how-to guide for peer feedback, along with other support materials, visit the University of Strathclyde’s PEER Toolkit website: http://www.reap.ac.uk/PEERToolkit.aspx

References

- Boud, D., & Molloy, E. (2012). Rethinking models of feedback for learning: the challenge of design. Assessment & Evaluation in Higher Education, 38(6), 698-712.

- Carless, D. (2006). Differing perceptions in the feedback process. Studies in Higher Education, 31(2), 219-233. doi:10.1080/03075070600572132

- Carless, D. (2013). Trust and its role in facilitating dialogic feedback. In D. Boud and E. Molloy (Eds.), Feedback in higher and professional education (pp. 90-103). London: Routledge.

- Johnson, C., Keating, J., Boud, D., Dalton, M., Kiegaldie, D., Hay, M., … Molloy, E. (2016). Identifying educator behaviours for high quality verbal feedback in health professions education: literature review and expert refinement. BMC Medical Education, 16(96). doi:10.1186/s12909-016-0613-5

- Nicol, D. (2014). Guiding principles of peer review: Unlocking learners’ evaluative skills. In C. Kreber, C. Anderson, N. Entwistle and J. McArthur (Eds.), Advances and Innovations in University Assessment and Feedback. Edinburgh: Edinburgh University Press.

- Stegmann, K., Pilz, F., Siebeck, M., & Fischer, F. (2012). Vicarious learning during simulations: Is it more effective than hands-on training? Medical Education, 46(10), 1001-1008. doi:10.1111/j.1365-2923.2012.04344.x

- Tai, J. H.-M., Canny, B., Haines, T., & Molloy, E. (2016). The role of peer-assisted learning in building evaluative judgement: opportunities in clinical medical education. Advances in Health Sciences Education, 21(3), 659-676. doi:10.1007/s10459-015-9659-0

- Watling, C., Driessen, E., van der Vleuten, C., & Lingard, L. (2012). Learning from clinical work: the roles of learning cues and credibility judgements. Medical Education, 46(2), 192-200. doi:10.1111/j.1365-2923.2011.04126.x